- Braun & Brains

How to Tell If You're Hot, According to Science

PLUS: Anthropic co-founders to donate 80% of their wealth, TikTokers building cyberdecks with Raspberry Pis, and Pokémon Pokopia.

Hello world,

Everything I have learned about looksmaxxing I have learned against my will. Over the past few years, the topic that once lived in the depths of Reddit has found social media virality with the help of young men doing whatever it takes to get more attractive. In case you’ve been living under a rock, looksmaxxing is the practice of optimizing your appearance, including softmaxxing (gym workouts, skincare routines, better sleep) and hardmaxxing (unregulated peptides, growth hormones, bone smashing, and jaw surgery). Young women have been doing some pretty insane stuff for a long time to get as hot as possible and publicly sharing their pursuits, and now it feels like young men are starting to catch up.

Welcome to The Glow-Up Industrial Complex.

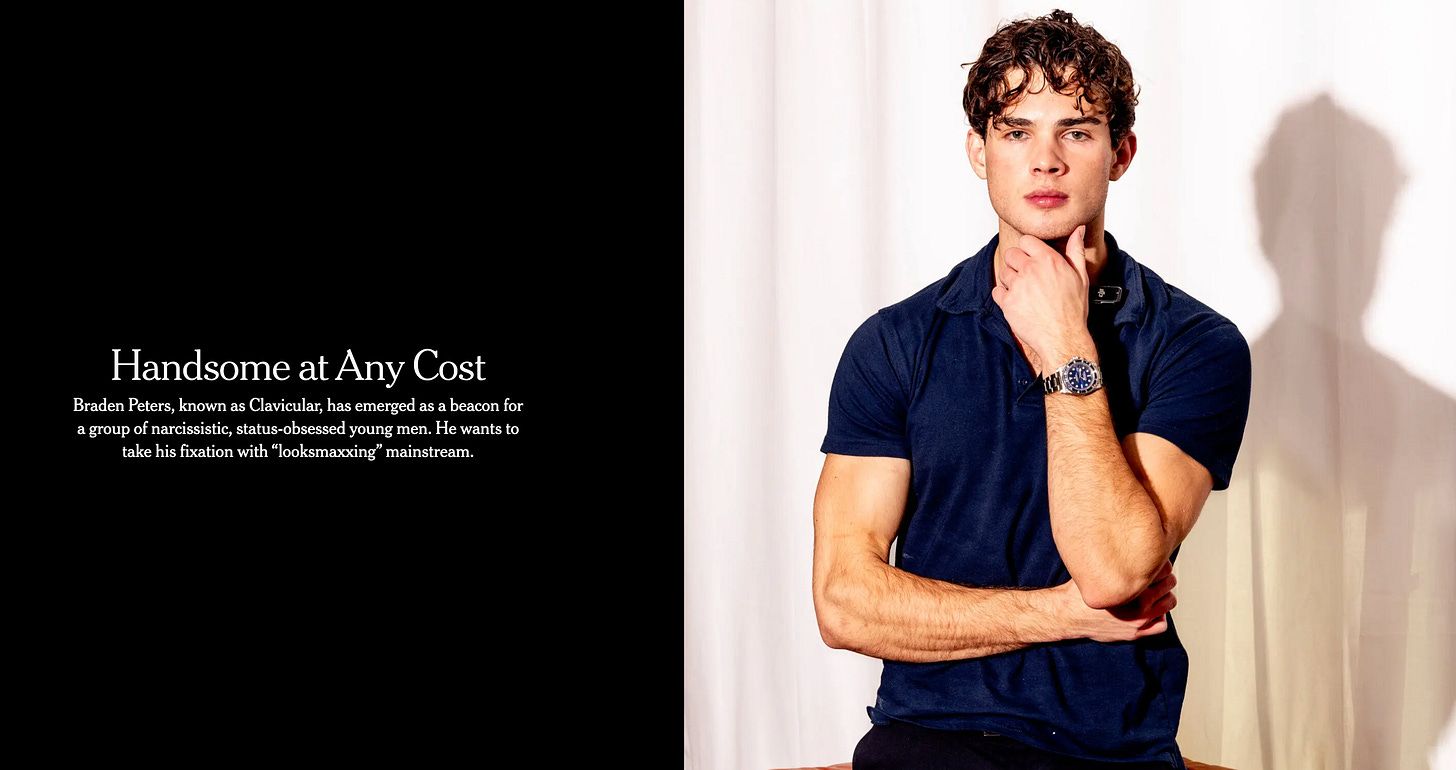

The biggest name in the space right now is Clavicular, a 20-year-old streamer profiled by the New York Times in February 2026. He makes over $100K a month, has 10K concurrent viewers, and started taking testosterone at 14. He ordered steroids to a PO box when his parents threw out his first stash. He smashed his own jaw to reshape it. He sees every attempt to be more attractive as a rational one. Even if it’s literally hitting himself in the face with a hammer.

Fun fact, he is from Hoboken. And was homeschooled. (NYT)

That psychology, the idea that your face is a problem with a solution, is exactly what Qoves is selling.

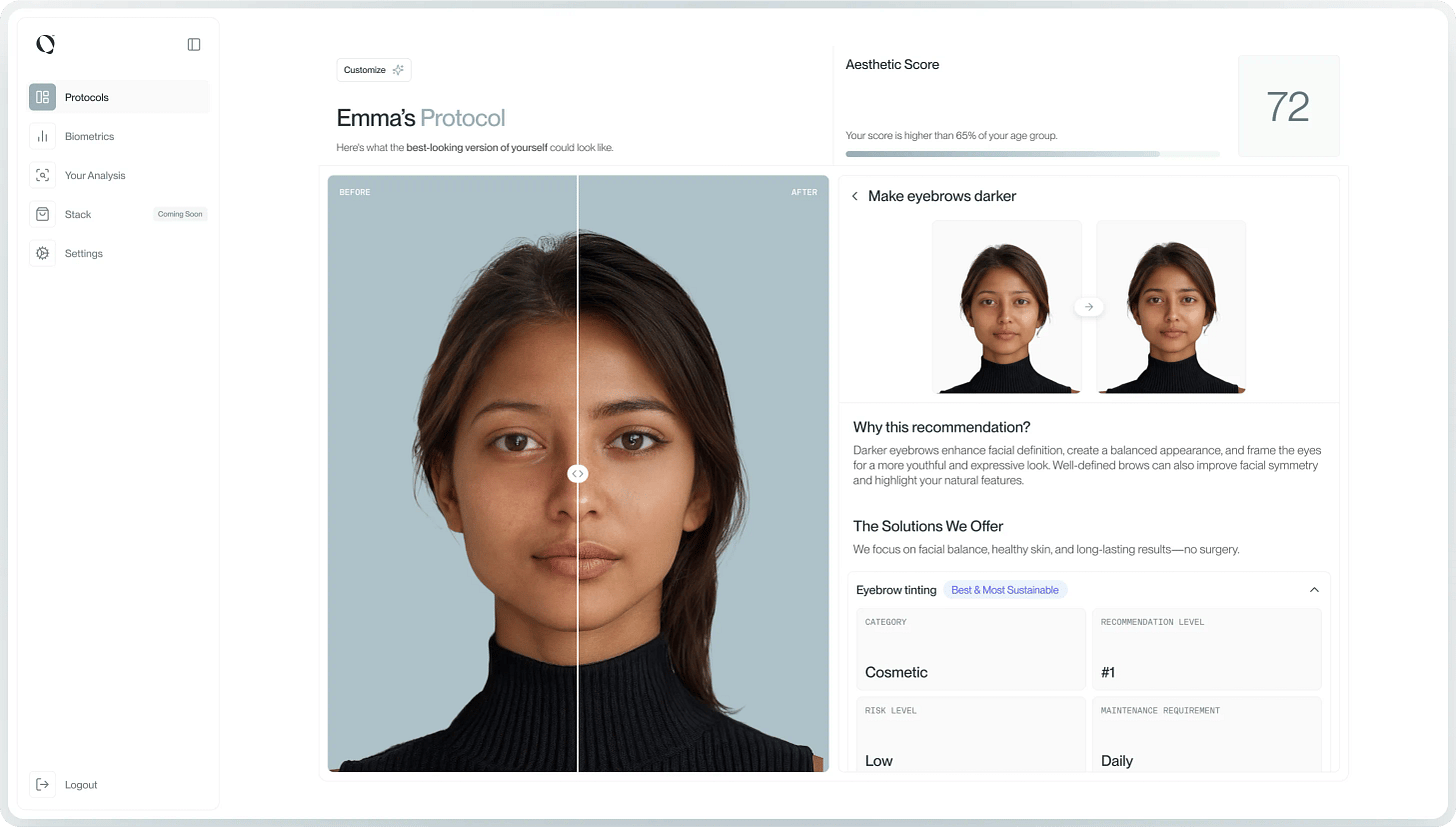

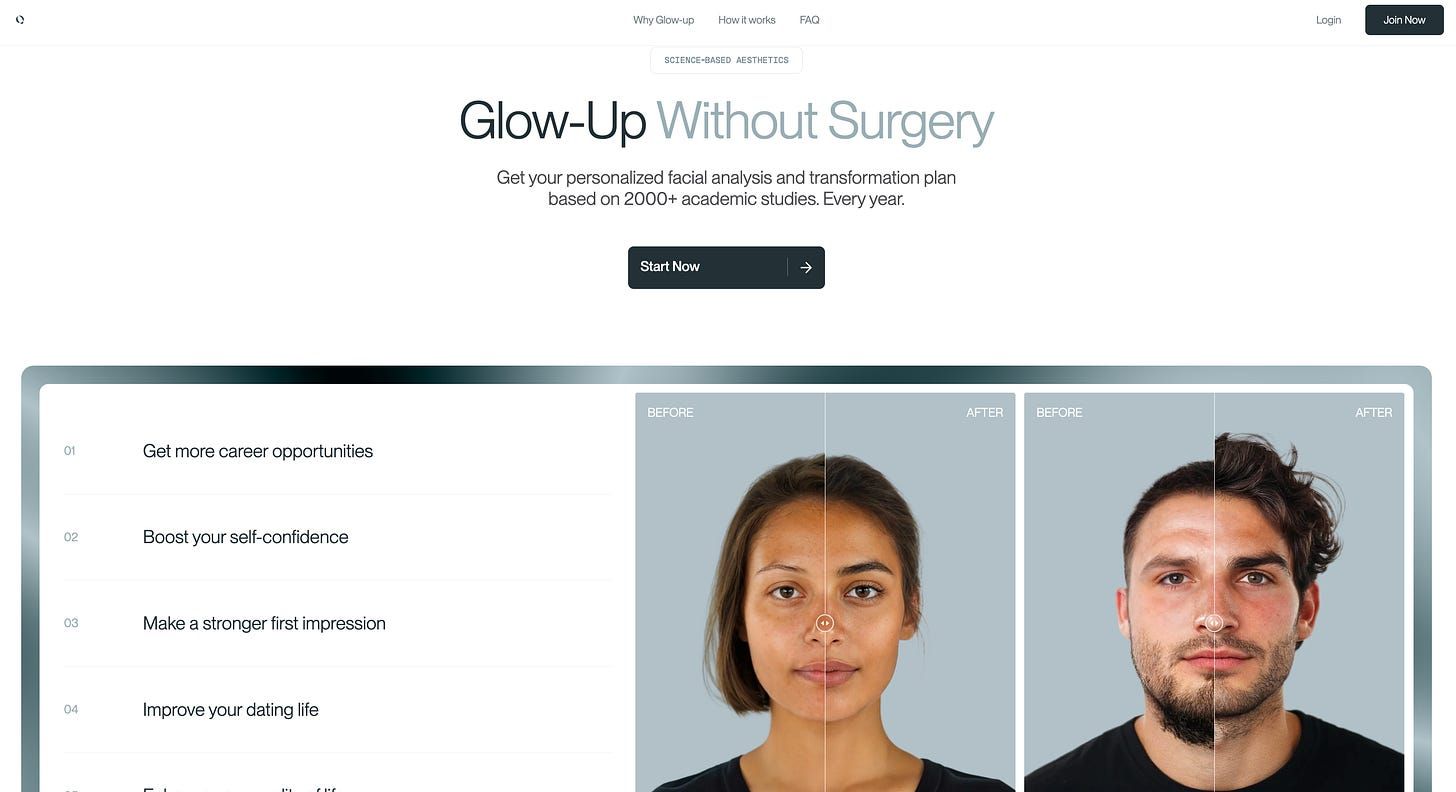

Qoves is a $150/year facial analysis service that uses AI and academic research to evaluate your face and build a personalized glow-up plan, without surgery. You upload photos, answer a few questions, and get back a detailed breakdown of your features, biometric scores, and a step-by-step protocol covering grooming, skincare, and styling. The pitch is improving overall facial harmony rather than fixating on one feature, with before-and-after simulations and progress tracking over time.

From qoves.com

They are commodifying the very skills your openly judgmental aunt has perfected.

They also have nearly 1M YouTube subscribers and 679 videos with titles like “The Top 10 Facial Features For An Attractive Face” and “What Model Scouts ACTUALLY Look For,” pulling 2-3M views each. It almost feels like a masterclass guru scheme where the channel tells you what’s wrong and then sells the vulnerable a magical subscription to fix all of their problems.

By the time someone is uploading their photos, they have already been told, repeatedly and in detail by the company, what an attractive face looks like and how far theirs might be from it.

The scroll-stopping post that brought it all to my attention this week came from a TikTok user called @itsanamcara, who actually spent her hard-earned cash on it. Her original photo scored 49/100 on Qoves's Aesthetic score. At the bottom of the image, a disclaimer states the number reflects “how closely your current features align with your personal aesthetic potential, based on non-surgical factors. It is a measure of potential, not a judgment of attractiveness.” I find her to be a pretty girl and I hate the idea of her being boiled down to a number. She’s not a 49. She is a human.


A score is a score. Calling it aesthetic potential instead of attractiveness doesn’t change what happens in your brain when you see 49 out of 100 next to your face. The disclaimer is corporate language trying to outrun a number.

Screenshot from the TikTok

Her video also reminded me of other videos and posts that trended in the past, where users share photos and videos of themselves and ask strangers on the internet how they can become more attractive. I have thick skin, but it still seems like a twisted humiliation ritual to me.

Qoves cites 2K+ academic studies, but every one of them proves that attractiveness bias exists in the world, not that Qoves can measure or improve it. The research establishes the problem. It does not validate the solution. Back in 2021, MIT Technology Review wrote about Qoves and found that the system trains on photos already manually scored by humans, meaning it learns to replicate human bias, not some objective standard of beauty. A Cornell computer vision professor they spoke to admitted that even researchers don’t fully understand how these systems work. That same piece found that beauty AI systems consistently encode Eurocentric bias, ranking darker-skinned women as less attractive and rewarding faces with European features regardless of the subject’s background.

Qoves says it accounts for ethnicity and cultural beauty standards. A University of Maryland researcher quoted in that piece said she has never seen a culturally sensitive beauty AI.

I’m not saying all pursuits of self-improvement are bad, and I’m not ignorant of the fact that things like pretty privilege exist. I’m a young, thin, able-bodied white woman. When you google pretty privilege, someone who resembles me, plus a few inches added in height, probably shows up on the first page. I get that it’s a thing and we live in a society.

However, I also think we’re all thinking about ourselves too much. Doing all of this not only puts such a damper on your mental health, but makes you a more judgmental person towards others, which is just pushing the looksmaxxing agenda. That’s what the big looksmaxxers want. Don’t let them win. Take the time you’re spending on trying to perfect your face and read a book. Go to a pottery class. Talk with friends. Get out there. No one belongs in a constant state of panic about how they look. Take a deep breath and do not spend $150 for a computer to tell you how hot you are.

A classic reminder from Jemima Kirke.

Family Matters

Get your kids off looksmaxxing on social media and get them a Raspberry Pi. I’ve been seeing very cool girls on TikTok build cyberdecks, custom portable computers built with Raspberry Pi boards and 3D-printed cases. The builds typically feature dual screens and modular components, used for field work, hardware testing, and coding on the go. It’s definitely influenced me to get my hands on a Raspberry Pi to tinker with the next time I have a free weekend. (TikTok)

@ubeboobey on TikTok

WhatsApp is rolling out parent-managed accounts for kids under 13 with restricted features: no Meta AI, no Channels, no disappearing messages, and messages from unknown contacts appear blurred. Parents control a PIN for approving group invites and message requests, though they cannot read the child’s messages since end-to-end encryption is preserved. (TechCrunch)

Parents are finding plenty of uses for AI consumer agents despite VC skepticism about the category, listing tasks like scheduling, meal planning, and household coordination as areas where they want AI help. The trend suggests consumer AI demand may be stronger than investor sentiment indicates. (X Trending)

Not Great!

Atlassian is cutting 1.6K jobs, about 10% of its workforce, to “self-fund” investments in AI and enterprise sales. The restructuring will cost $225M to $236M in severance and related charges, with North America seeing the largest share of cuts. Shares rose nearly 2% after the announcement. (CNBC)

Journalist Julia Angwin filed a class-action lawsuit against Grammarly, alleging the company used real journalists’, authors’, and academics’ identities in its AI “Expert Review” feature without consent. The feature generated writing feedback styled after specific named experts, including at least one who had recently died. Grammarly has since disabled it. (Wired)

Meta is delaying its new AI model “Avocado” from March to at least May after it fell short in internal tests for reasoning, coding, and writing compared to models from Google, OpenAI, and Anthropic. Meta’s AI team has discussed temporarily using Google’s Gemini models for its products while Avocado catches up. (New York Times)

Binance

The DOJ is investigating whether Iran used Binance to move over $1B to entities supporting Iran-backed groups, including Yemen’s Houthi militants. Binance sued the WSJ over the reporting and claims its sanctions-related exposure dropped 96.8% since January 2024. The exchange previously paid $4.3B in penalties in 2023 for anti-money laundering and sanctions violations. (Wall Street Journal)

Mastercard launched a Crypto Partner Program bringing together 85+ companies, including Binance, PayPal, Ripple, Circle, and Gemini, to connect blockchain-based systems with traditional payment rails. The initiative focuses on cross-border transfers, B2B payments, and global payouts, signaling a shift from experimental pilots toward mainstream deployment. (CoinDesk)

Money Moves

Nintendo shares rose ~15% over two days after Pokémon Pokopia, a life-simulation game for the Switch 2, became a surprise hit with physical copies selling out at major U.S. retailers. The game scored 89 on Metacritic and offset investor concerns about rising memory costs that had dragged the stock down 30% since November. (Bloomberg)

Quince raised a $500M Series E led by Iconiq at a $10.1B valuation, more than doubling its $4.5B valuation from early 2025. The direct-to-consumer company, known for its $50 cashmere sweater, now sells apparel, home goods, beauty, and wellness products, with revenue surpassing $1B. (TechCrunch)

Anthropic

Anthropic’s Claude add-ins for Excel and PowerPoint now share a single conversation thread, letting the AI maintain context when switching between apps. The update introduces one-click “Skills” that let teams save and share proven workflows, with prebuilt templates for financial modeling, data cleaning, and deck building. Available on paid Claude plans on Mac and Windows as of March 11. (VentureBeat)

All seven Anthropic co-founders have pledged to donate 80% of their wealth, with the combined pledge worth roughly $37.8B if the company goes public at its current valuation. Dozens of other employees have also made similar commitments, and much of the funding is expected to flow to EA-aligned organizations; co-founder Daniela Amodei’s husband Holden Karnofsky co-founded GiveWell and Open Philanthropy. Dario Amodei, Amanda Askell, and Jack Clark have all signed the Giving What We Can Pledge. (Transformer News)

Anthropic created the “Anthropic Institute,” an internal research body led by co-founder Jack Clark, to study AI’s societal, economic, and safety implications. The institute merges three existing teams and has hired former Google DeepMind and OpenAI researchers. The launch comes as the company fights a Pentagon blacklist, with hundreds of millions to potentially billions of dollars in 2026 government revenue at risk. (The Verge)

Related: The New York Times Magazine spoke to 70+ software developers at Google, Amazon, Microsoft, and Apple about how AI is transforming programming. One developer described Claude Code doing the bulk of his work, finishing a customer project in 30 minutes. Many Silicon Valley programmers are now barely programming themselves, and the article frames their mix of exhilaration and anxiety as a preview for workers in other fields. (New York Times)
